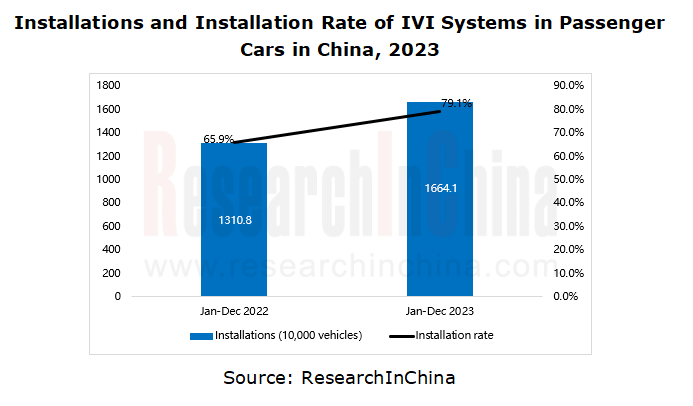

In 2023, 16.641 million passenger cars in China were equipped with IVI systems, up 27.0% year on year, and the installation rate climbed to 79.1%, up 13.2 percentage points. As installations grow, the industry is making a qualitative leap after a period of quantitative change. Amid this development, accurately meeting users’ individual needs and preferences, creating exclusive cockpit experiences and delivering personalization have become the bottleneck the industry must break through to keep growing.
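The year-on-year figures imply the 2022 baseline. The back-calculation below is derived only from the numbers stated above (rounded) and is not separately reported data. Here $N$ is the number of equipped cars, $g$ the growth rate and $r$ the installation rate:

$$N_{2022} = \frac{N_{2023}}{1+g} = \frac{16.641\ \text{million}}{1.270} \approx 13.10\ \text{million}$$

$$r_{2022} = r_{2023} - \Delta r = 79.1\% - 13.2\ \text{pp} = 65.9\%$$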

1. Upgraded IVI-smartphone integration brings more innovative personalized cockpit experiences.

Mobile phones, the devices most closely tied to people’s lives and work, collect and record massive amounts of user behavior data, have rich ecosystem resources, and are already highly personalized. Through IVI-smartphone integration, automakers carry user habits and ecosystems over from phones to cars, extending intelligent, personalized services into the automotive field.

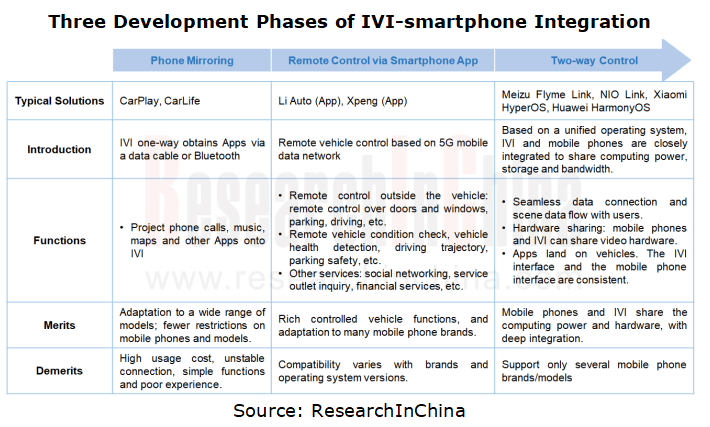

IVI-smartphone integration has gone through three phases:

In the first phase, automakers used a data cable or Bluetooth so that the IVI could obtain smartphone Apps one way through phone projection modes such as CarPlay and CarLife, projecting phone calls, music, maps and other applications onto the IVI interface.

In the second phase, with the arrival of connected vehicles, major OEMs launched their own mobile Apps offering diverse functions such as remote vehicle control, remote vehicle checks and car owner community interaction, further enhancing the vehicle-smartphone interconnection experience.

In the third phase, as automotive connectivity deepens, some automakers have begun to explore deep integration between mobile phones and the underlying IVI operating system. Built on a unified operating system, the IVI and the phone are closely integrated to share computing power, storage and bandwidth, enabling seamless data connection, “scenario data flow with users” and hardware sharing, which greatly improves vehicle intelligence and user experience.

In this new mode of IVI-smartphone integration, the phone App ecosystem substantially supplements the IVI ecosystem and helps automakers keep the cost of building cockpit ecosystems from growing excessively. In addition, the high performance of high-end mobile phone chips meets automakers’ rising computing power requirements for next-generation cockpits.

So far, quite a few companies have applied IVI-smartphone integration technology to production models. Examples include AITO with Huawei phones, Lynk & Co 08 with Meizu 20, NIO with NIO Phone, and the upcoming Xiaomi SU7 with Xiaomi phones, all of which demonstrate the technology in practice. In addition, Polestar China and Xingji Meizu announced that they will jointly develop Polestar OS and launch Polestar mobile phones.

Case 1: Based on the cockpit system “Flyme Auto”, Xingji Meizu has integrated the IVI ecosystem with the smartphone ecosystem.

In March 2023, Xingji Meizu introduced a brand-new concept, the “mobile phone domain”, and released Meizu 20 Flagship, the first “Auto phone”, together with the intelligent cockpit system Flyme Auto. The phone operating system Flyme 10 and the IVI-smartphone integration system Flyme Link enable Flyme Auto to support ecosystem synchronization, hardware sharing and OTA updates between phones and cars.

The Flyme Auto cockpit system features small window mode, imperceptible connection, unbounded desktop, cross-terminal “what you see is what you can say”, hardware sharing, phone-assisted OTA, application relay, gesture interaction, etc.

Unbounded desktop: When the user enters the car with a Meizu phone, the phone automatically syncs the App in use to the IVI, keeping the IVI and phone interfaces consistent.

Hardware sharing: Mobile phones and the IVI can share audio and video hardware such as cameras and microphones. For example, during a video conference, the user can switch to the car’s camera and microphone with one click, and can also share mobile data between the phone and the IVI.

Phone-assisted OTA: Users can first download the IVI upgrade firmware to their phones; the next time they get in the car, Flyme Link pushes the firmware directly to the IVI for the update.
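The phone-assisted OTA flow is essentially store-and-forward: download on the phone first, push to the car later. A minimal Python sketch of that flow follows. It is a hypothetical model: the class names, the tuple version scheme and the rule of pushing only newer builds are illustrative assumptions, not Flyme Link’s actual interface.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Version = Tuple[int, int, int]  # assumed (major, minor, patch) scheme

@dataclass
class FirmwarePackage:
    version: Version
    payload: bytes

@dataclass
class Vehicle:
    installed: Version

    def install(self, pkg: FirmwarePackage) -> None:
        # Stand-in for the real flashing process on the IVI.
        self.installed = pkg.version

class PhoneRelay:
    """Hypothetical phone-side store-and-forward of an IVI update."""

    def __init__(self) -> None:
        self.staged: Optional[FirmwarePackage] = None

    def download(self, pkg: FirmwarePackage) -> None:
        # Step 1: fetch the update over the phone's own connection ahead of time.
        self.staged = pkg

    def on_vehicle_connected(self, vehicle: Vehicle) -> bool:
        # Step 2: when the phone next links to the car, push only a newer build.
        if self.staged is None or self.staged.version <= vehicle.installed:
            return False
        vehicle.install(self.staged)
        self.staged = None  # clear the staged package once delivered
        return True

car = Vehicle(installed=(1, 2, 0))
phone = PhoneRelay()
phone.download(FirmwarePackage(version=(1, 3, 0), payload=b"..."))
print(phone.on_vehicle_connected(car), car.installed)  # True (1, 3, 0)
print(phone.on_vehicle_connected(car))  # False: nothing staged
```

The version check keeps a stale download from overwriting newer firmware the car may have received another way.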

Case 2: NIO released “NIO Link” technology to realize seamless smartphone-IVI integration.

In September 2023, NIO officially released NIO Phone, which uses NIO Link, NIO’s all-scenario interconnection technology, for smartphone-car integration. This technology offers the following functions:

Vehicle control: NIO Phone has a NIO Link vehicle control key on its left side and supports 30 IVI-phone integration functions, including remote vehicle control (unlocking/locking, lowering/raising windows, remote air conditioning adjustment, etc.), near-field vehicle control (close to the car, functions such as unlock and summon work even without a signal), and in-vehicle control (the phone automatically recognizes which seat the user is in and controls functions for that position, e.g., seat, air conditioning and sunroof).

Seamless flow: NIO Phone’s navigation clipboard relay syncs navigation information with one click and securely transfers text copied and location information recognized on the phone to the IVI. Users can also view their phone photo albums on the IVI in real time.

Hardware sharing: In the conference scenario, a meeting in progress on the phone automatically transfers to the IVI once the user gets in the car. In the gaming scenario, NIO Phone can serve as a gamepad for games on the center console screen and use the in-cabin audio system.

2. Generative AI helps automakers create proactive, anthropomorphic and natural cockpit services.

Supported by machine learning models and deep learning, generative AI analyzes latent patterns in historical data in depth and creates new content on its own. In recent years, many automakers have introduced AI foundation models into their vehicles. Using AI, they accurately capture users’ driving habits, preferences and needs, build personalized cockpit experiences, and enable intelligent music recommendation, navigation, vehicle performance settings and other functions. They also learn from driving behavior to predict and proactively meet user needs, ensuring driving safety and comfort.

Case 1: Galaxy E8 launched “AI Rhythm”, a function which can accurately recognize the keywords in song titles/lyrics and automatically generate pictures.

Launched with Galaxy E8, “AI Rhythm” uses AI to generate pictures automatically and accurately from keywords in song titles and lyrics and blends dynamic effects into them, giving users an immersive music experience. Notably, the function handles not only Chinese songs but also English songs, demonstrating strong cross-language processing capabilities.

Case 2: Based on Mind GPT, Li Auto introduced intelligent navigation, mobility assistant and other functions.

Li Auto built the Mind GPT base model on its self-developed TaskFormer neural network architecture. Targeting practical scenarios such as driving, entertainment and mobility, Li Auto fine-tuned the model with techniques such as SFT (supervised fine-tuning) and RLHF (reinforcement learning from human feedback), giving Mind GPT capabilities in understanding, generation, knowledge memory and reasoning.

Intelligent navigation: Mind GPT can provide accurate navigation based on the driver’s voice instructions and map data. It can also plan the optimal route for the driver based on real-time road conditions and traffic information.

Mobility assistant: Mind GPT suggests trip ideas, plans itineraries, and searches the newly added Meituan App for good places to eat, drink and have fun; the recommended places and routes can be sent directly to navigation. During the trip, Lixiang Tongxue, Li Auto’s voice assistant, can also tell users about the history and culture of scenic spots along the way.

Case 3: Mercedes-Benz’s new MBUX virtual assistant will integrate ChatGPT.

At CES 2024, Mercedes-Benz exhibited its MBUX Virtual Assistant integrated with ChatGPT. Running on the new Mercedes-Benz Operating System (MB.OS), MBUX Virtual Assistant uses generative AI and proactive intelligence, and has four “personality traits”: Natural, Predictive, Personal and Empathetic. Built on a large language model, it offers more natural, humanlike and personalized voice interaction. Using 3D graphics, its virtual avatar can express different moods and states, with emotions ranging from calm to excited.

It offers thoughtful suggestions tailored to user habits and scenarios: for example, playing the latest news in the morning, reserving a parking space in advance based on the driver’s daily route, or arranging calendar events around traffic conditions.

These personality traits make conversations more natural and fluid. Customers can talk to the assistant with or without the wake word “Hey Mercedes”. It can answer questions directly or ask “reflective questions” to obtain more precise information.

3. Automakers open more edit permissions to users via co-creation platforms.

The development of software-defined vehicles and electrical/electronic architectures (EEAs) makes OEM co-creation platforms possible. Through these platforms, automakers grant users more custom editing permissions, letting them build personalized, exclusive cars that suit their preferences and needs.

Case 1: GAC launched “Automotive Sound Effects Co-creation Platform” and “ADiGO MAGIC”.

In December 2022, GAC launched the “Automotive Sound Effects Co-creation Platform”, allowing users to upload sound effects and become certified sound engineers, so that every user can share their own auditory taste.

In June 2023, GAC unveiled “ADiGO MAGIC”, a co-creation platform that opens more than 2,000 atomic vehicle services for users to edit and combine freely, defining personalized products that are not limited to preset scenarios. ADiGO MAGIC not only meets consumers’ demand for personalized functional services but also serves developers, aiming to solve tricky problems in automotive software development such as complicated processes, high entry barriers and slow verification, thereby promoting innovation across the automotive industry.
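The idea of freely combining atomic services into user-defined scenarios can be illustrated with a small Python sketch. Everything in it is hypothetical: `AtomicService`, `Scenario` and the three sample services are invented for illustration and do not reflect GAC’s actual ADiGO MAGIC interfaces.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass(frozen=True)
class AtomicService:
    """Hypothetical atomic service: one indivisible vehicle capability."""
    name: str
    action: Callable[[Dict[str, object]], str]

@dataclass
class Scenario:
    """A user-defined scenario: an ordered combination of atomic services."""
    name: str
    steps: List[AtomicService] = field(default_factory=list)

    def add(self, service: AtomicService) -> "Scenario":
        self.steps.append(service)
        return self  # return self so calls can be chained

    def run(self, context: Dict[str, object]) -> List[str]:
        # Execute each atomic service in order and collect the results.
        return [step.action(context) for step in self.steps]

# Illustrative services; a real platform exposes its own catalog.
SEAT_HEAT = AtomicService(
    "seat_heat", lambda ctx: f"seat heating set to level {ctx.get('seat_level', 1)}")
AMBIENT_LIGHT = AtomicService(
    "ambient_light", lambda ctx: f"ambient light set to {ctx.get('color', 'white')}")
PLAY_MUSIC = AtomicService(
    "play_music", lambda ctx: f"playing playlist '{ctx.get('playlist', 'default')}'")

winter_morning = Scenario("winter_morning").add(SEAT_HEAT).add(AMBIENT_LIGHT).add(PLAY_MUSIC)
for line in winter_morning.run({"seat_level": 2, "color": "amber", "playlist": "commute"}):
    print(line)
```

Modeling each capability as an independent unit is what allows free recombination; a production platform would presumably also need permission and safety checks, which this sketch omits.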

Case 2: Yangwang U9 supports custom programming.

In February 2024, Yangwang U9, the second model of the Yangwang brand, officially debuted. It offers users a large number of customizable modules covering doors, lights, seats, and the U9’s unique E4 platform and DiSus-X body control system. Because all of the car’s functions can be called from code, the U9 can be programmed to perform body movements such as “dancing to the beat”. In the future, Yangwang will work with artists from all walks of life to launch more fun, limited-edition “optional” functions. It will also open programming access to DiSus-X, movable parts, and sound and lighting functions, creating a private, exclusive “sense of ritual” together with users.